such as every 15 min last week, every week last year, every month from there on). keeping tiered versions in the past (e.g. Does not support "backup thinning" - i.e.

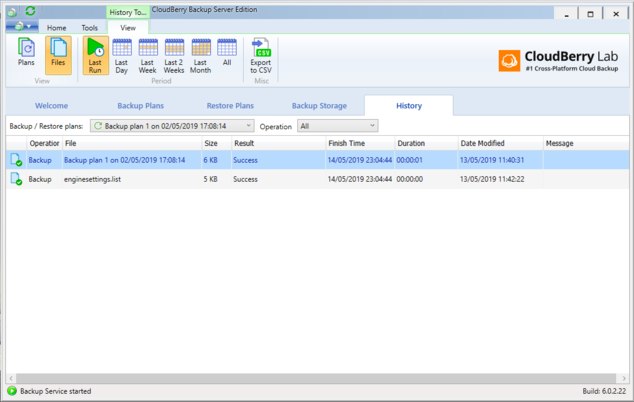

Cannot search for a file to restore - only browse the directory tree.Granted, same could be said about Crashplan - but Code42 has corporate products and customer and is a huge company, as opposed to small company in QBackup case. Unknown encryption implementation quality.Does not support Wassabi storage that is slightly cheaper than B2.It understand command line parameters so scheduling can be done via OS facilities (Cron/launchctl/Task Scheduler) Granted, it keeps local index and re-scans over 100k of files on Fusion drive in about 15-30 seconds - so it's not bad (it checks for timestamp and size - so if you modified file without changing timestamp or size it won't notice the changes). Which means it has to re-scan all files every time - it does not monitor the filesystem for changes. Crashplan where I went to town with regexps. Only glob format, partial matches, and and exact paths - which is OK for most uses, but not easy to migrate config from e.g. Does not support Regex for exclusions.Look and feel - very clean and polished product.The only program that I did not find bugs in yet and that seems to work fast and reliably (I'm only testing local backup for now - due to speed).During restore keeps your place in the directory while browsing different snapshots to choose which version to restore (big thing with me).Clean interface (I'm testing MacOS version).Way faster than others, especially Duplicati. Fairly easy to setup exclusions - by list of folders and pattern matching.I check from time to time to make sure my backups are what I want, restore, test, and verify, I have yet to have any issue.I'm trying it for a past few days, along with Arq, Cronosync, Duplicacy, Retrospect, GoodSync, Cloudberry, Duplicati, Duplcity. I get the most errors with those, but it's during peak use. The others, a few files here or there if it didn't backup for some reason, not the end of the world for me. 
The backups I really need 100% go off when I know nothing will fail and work stations are not in use. Since it's not a daily thing, or always the same number of files (most of the time it's 1 to 3) I'm assuming it was something someone was using remotely and I just ignore it and let it ride.Įdit: Just to clarify why I don't care about the errors I do get, I have to kickoff some backups during busy hours just because of bandwidth issues. I'm assuming it was in use, but the reports don't tell me what it is or why. The only semi-annoying thing with email alerts and even with shadow copy enabled, I from time to time get fails of some file that didn't copy. Not many products like to play nice with express. We only run 1 SQL server (express) for a small app, one thing I really like is the backup for that with cloudberry. My setup is as follows: servers / work stations do daily backups internally to their respective on-site backup server, each of those rsync to my main office / rack which then goes to AWS in one flavor or anything. Maybe if you're moving 100tb of data it might not be the best? This is just speculation. For the price, ease, and AWS integration, it works very well for me. I mostly rely on file backups instead of images so I can't speak to how well that works. I know everyone around here likes it but it just seems to be missing features and again the restore isn't that great. If you have large amounts of data their seeding feature (which again doesn't do true delta tracking) is kind of weak. Likely you would need to recreate your network connections as well. In response to the question if you can recovery into VMware or Hyper-V they say ,"Yes, that's possible" meaning you need to create VM's and mount the disk images, provision hardware and all of that. This means little to know support for restoring directly into a private or public cloud. They support restoring to an image but not actual virtual recovery onto an existing hypervisor, near as I can tell. 
This means that they must include the entire file (they call this incremental) in the backup set instead of just the changes made to specific files (They don't support true delta tracking.) This means larger backup sizes which is completely unnecessary. Here are some objective things to consider.Ĭloud Berry uses timestamps to determine if a file has been modified and not hashes.
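Both CloudBerry (described as using timestamps rather than hashes) and QBackup (described as checking only timestamp and size) decide whether a file changed from metadata rather than content. A minimal Python sketch of why that can miss edits, assuming a scanner that records `(mtime, size)` per file; the function names are hypothetical and are not either product's API. It edits a file in place without changing its length, restores the original modification time, and compares the two detection strategies.

```python
import hashlib
import os
import tempfile


def metadata_fingerprint(path: str) -> tuple[int, int]:
    """What a timestamp+size scanner records: (mtime in ns, size in bytes)."""
    st = os.stat(path)
    return (st.st_mtime_ns, st.st_size)


def content_fingerprint(path: str) -> str:
    """What a hash-based scanner records: SHA-256 of the file contents."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def demo() -> tuple[bool, bool]:
    """Return (metadata_detected_change, hash_detected_change)."""
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "report.txt")
        with open(path, "wb") as f:
            f.write(b"total: 100")
        st = os.stat(path)
        meta_before = metadata_fingerprint(path)
        hash_before = content_fingerprint(path)

        # Same-length edit (10 bytes over 10 bytes), then put the old timestamps back.
        with open(path, "r+b") as f:
            f.write(b"TOTAL: 999")
        os.utime(path, ns=(st.st_atime_ns, st.st_mtime_ns))

        return (metadata_fingerprint(path) != meta_before,
                content_fingerprint(path) != hash_before)


if __name__ == "__main__":
    meta_changed, hash_changed = demo()
    print(f"timestamp+size check sees a change: {meta_changed}")  # False
    print(f"content hash sees a change: {hash_changed}")          # True
```

The trade-off is cost: hashing means reading every byte of every file on each scan, while the metadata check only needs a `stat` call per file, which is why a 100k-file re-scan can finish in seconds.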
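The complaint that an "incremental" which re-uploads the whole changed file is wasteful compared with true delta tracking can be made concrete. A rough Python sketch, assuming a simplified fixed-size block scheme with a hypothetical 4 KiB block size (real delta tools such as rsync use rolling checksums so that inserted bytes don't shift every later block); it compares bytes sent by whole-file incremental versus block-level delta for a small edit.

```python
import hashlib

BLOCK_SIZE = 4096  # assumed block size for this sketch


def block_hashes(data: bytes, block_size: int = BLOCK_SIZE) -> list[str]:
    """SHA-256 of each fixed-size block of the data."""
    return [hashlib.sha256(data[i:i + block_size]).hexdigest()
            for i in range(0, len(data), block_size)]


def changed_blocks(old: bytes, new: bytes, block_size: int = BLOCK_SIZE) -> list[int]:
    """Indices of blocks in `new` that differ from, or don't exist in, `old`."""
    old_h = block_hashes(old, block_size)
    new_h = block_hashes(new, block_size)
    return [i for i, h in enumerate(new_h) if i >= len(old_h) or old_h[i] != h]


def upload_sizes(old: bytes, new: bytes, block_size: int = BLOCK_SIZE) -> tuple[int, int]:
    """(bytes sent by whole-file incremental, bytes sent by block-level delta)."""
    delta = sum(len(new[i * block_size:(i + 1) * block_size])
                for i in changed_blocks(old, new, block_size))
    return len(new), delta


if __name__ == "__main__":
    old = bytes(10 * 1024 * 1024)          # 10 MiB file of zero bytes
    new = bytearray(old)
    new[5_000_000:5_000_010] = b"x" * 10   # change 10 bytes in the middle
    whole, delta = upload_sizes(old, bytes(new))
    print(whole, delta)  # 10485760 4096
```

A 10-byte edit costs the whole 10 MiB under whole-file incremental but one 4 KiB block under the delta scheme, which is the "larger backup sizes" point in concrete terms.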
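The regex-versus-glob limitation for exclusions mostly bites during migration: a rule that leans on regex features (alternation, "anything but a slash") has to be split into several globs and may not translate exactly. A small Python sketch with a made-up CrashPlan-style rule, assuming Python's `fnmatch` semantics for globs (where `*` also matches `/`), which may differ from how QBackup's own glob matcher treats path separators.

```python
import fnmatch
import re

# Hypothetical regex exclusion: any node_modules or .git directory,
# plus editor swap files whose name starts with a dot (e.g. .main.c.swp / .swo).
REGEX_RULE = re.compile(r".*/(node_modules|\.git)/.*|.*/\.[^/]+\.sw[op]")

# Closest glob list (assumed fnmatch semantics: '*' can cross '/').
GLOB_RULES = ["*/node_modules/*", "*/.git/*", "*/.*.swp", "*/.*.swo"]


def excluded_by_regex(path: str) -> bool:
    return REGEX_RULE.fullmatch(path) is not None


def excluded_by_globs(path: str) -> bool:
    return any(fnmatch.fnmatchcase(path, pat) for pat in GLOB_RULES)


if __name__ == "__main__":
    paths = [
        "/Users/me/proj/node_modules/lodash/index.js",
        "/Users/me/proj/.main.c.swp",
        "/Users/me/.config/notes.swp",  # regex=False glob=True: '*' crossed a '/'
        "/Users/me/proj/src/main.c",
    ]
    for p in paths:
        print(f"{p}: regex={excluded_by_regex(p)} glob={excluded_by_globs(p)}")
```

One regex became four globs, and the globs still over-exclude `notes.swp` inside a dot-directory because `[^/]+` has no glob equivalent under these semantics.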
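The "backup thinning" policy listed as missing from QBackup can be sketched as bucketing: map each snapshot to a retention bucket based on its age and keep only the newest snapshot per bucket. A minimal Python sketch, assuming the tier boundaries from the example (15-minute slots for the last 7 days, ISO weeks for the last 365 days, calendar months beyond); the function names are hypothetical and this is not any product's actual retention algorithm.

```python
from datetime import datetime, timedelta


def bucket_key(ts: datetime, now: datetime) -> tuple:
    """Map a snapshot time to a retention bucket (assumed tier boundaries)."""
    age = now - ts
    if age < timedelta(days=7):
        # One snapshot per 15-minute slot.
        slot = ts.replace(minute=ts.minute - ts.minute % 15, second=0, microsecond=0)
        return ("15min", slot)
    if age < timedelta(days=365):
        # One snapshot per ISO week.
        iso = ts.isocalendar()
        return ("week", iso[0], iso[1])
    # One snapshot per calendar month.
    return ("month", ts.year, ts.month)


def thin(snapshots: list[datetime], now: datetime) -> list[datetime]:
    """Return the snapshots to keep: the newest one in each bucket, oldest first."""
    keep: dict[tuple, datetime] = {}
    for ts in snapshots:
        key = bucket_key(ts, now)
        if key not in keep or ts > keep[key]:
            keep[key] = ts
    return sorted(keep.values())


if __name__ == "__main__":
    now = datetime(2024, 6, 1, 12, 0)
    snaps = [
        datetime(2024, 6, 1, 11, 0), datetime(2024, 6, 1, 11, 5),   # same 15-min slot
        now - timedelta(days=30), now - timedelta(days=30, hours=-1),  # same ISO week
        datetime(2022, 3, 5), datetime(2022, 3, 20),                   # same month
    ]
    for ts in thin(snaps, now):
        print(ts)
```

Running `thin` after each backup and deleting everything it doesn't return keeps recent history dense and old history sparse. A snapshot that ages across a tier boundary simply falls into a coarser bucket on the next run.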